Netflix, eBay, Twitter, PayPal, Uber – these huge, household-name businesses all started out using traditional monolithic architectures.

But, as these organisations grew, they began to realise that using monoliths would no longer be viable for their operational needs. Thus, they turned to microservices, taking advantage of how this modern architecture can scale with rapid growth, constantly changing consumer demands, and unpredictable business environments.

Today, more companies are beginning to migrate or have migrated to a microservices-based architecture – turning what was initially an internet buzzword into the architecture of the modern, digital world.

As more and more organisations adopt a microservice architecture, they are beginning to realise the agility, productivity, and business value they derive from microservices... as well as the challenges that come with implementing them.

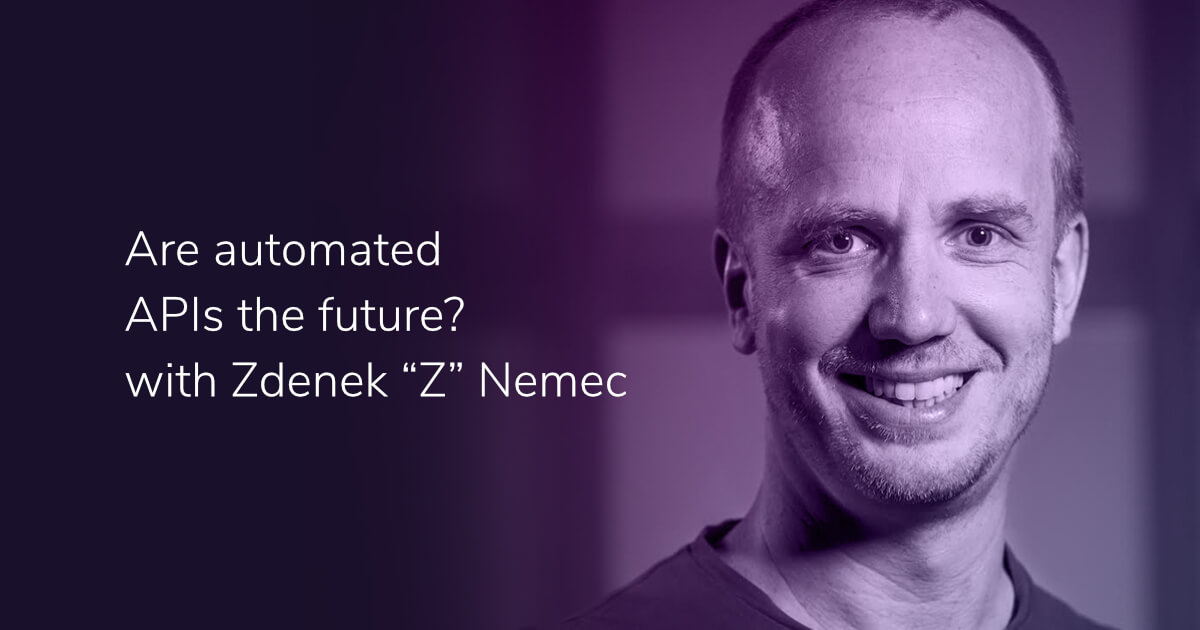

In this round of Cocktails, we discuss microservices: how enterprises can better understand the complexities of their design, what their benefits are, and what to consider when implementing this type of architecture.

Episode outline

- Rahul Rai explains how microservices have impacted software development.

- What advantages do microservices offer?

- How do container orchestration engines help manage the deployment of microservices?

- What is a Service Mesh and how does it facilitate microservices?

- What problems are often encountered when organisations try to implement microservices?

Transcript

Kevin Montalbo

Greetings, internet! My name is Kevin Montalbo. On today's round of Cocktails, we discuss microservices: how enterprises can better understand the complexities of their design, what their benefits are, and what to consider when implementing this type of architecture.

Joining us today all the way from Australia is Toro Cloud CEO and founder and Cocktail co-host David Brown. Hi, David!

David Brown

Hi, Kevin!

Kevin Montalbo

And today's guest is a cloud architect, an engineering manager, and the technical team lead of the Australian fintech company Zip. He has authored books on microservices orchestration and service mesh, including Microservices with Azure (Packt), Kubernetes Succinctly, and Istio Succinctly.

Sharing with us his knowledge and experience on microservices, also from Sydney, Australia, Rahul Rai. Good day, Rahul! Good to have you on.

Rahul Rai

Thank you!

Kevin Montalbo

All right. How have microservices changed the way we do application development and integration?

Rahul Rai

So, I’ll present a slightly heavy answer. I'll begin with the development aspect of microservices. A microservice typically has three building-block components: the application binaries that developers write; the state, which is where the data used by your application is stored; and the configuration with which you deploy and update the application and the state. Now, you need to deploy, scale, and update each of these three components independently, and how you do that is critical for the scalability and maintainability of the system.

Now, this can be a challenging problem to solve, and the choice of technology used to host each of the building blocks plays an important role in addressing this complexity. For example, if the code is developed in .NET in the form of a web API and the state is stored in an external Azure SQL database, then the configuration that you write, or the scripts for that matter, for upgrading and scaling these services will have to handle compute, storage, and network capabilities on both of these platforms simultaneously.

This is not so much of a problem to solve now, because modern microservices platforms such as Kubernetes offer a possible solution by colocating state and code for ease of management, which simplifies this problem to a great extent. Also, with the help of Kubernetes operators, for example the AWS Service Operator and its Azure counterpart, Azure Service Operator, you can deploy the state to a PaaS service. So, you can still host your data in, say, Azure SQL Database or, on AWS, DynamoDB, and still deploy your applications to Kubernetes, but manage both of them using a single configuration through these operators, which helps keep these configurations very simple.

Now, moving on to the integration aspect of microservices, I'd just like to highlight a few common integration models and how they can help bind microservices together. One of the newer ones, not the most common but sort of a burning topic right now, is composite user interfaces, or micro frontends. With this model, when you build your microservices, you ship your user interface along with them, and there could be an integration team involved that plugs all of these UI bits together. Some typical websites that implement this model are Facebook and Amazon’s e-commerce website.

Now, composite frontends are not a viable solution for rich clients, such as mobile applications, where people need to have very niche skills in order to build such user interfaces. So for such applications, a backend, most commonly known as a “backend for frontend” (BFF), is built, and teams focus solely on building a rich client. For any data, they query this backend interface in order to serve the user interface.

Another model of integrating microservices, and the most common one, is synchronous communication. You use REST to transfer JSON data over HTTP. This can also help with service discovery by using Hypermedia as the Engine of Application State, or HATEOAS (I think that's how it is pronounced). It's a constraint of REST which models relationships between resources by using links. So, once the client queries the entry point of the service, it can use links such as these to navigate to other microservices.
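To make the HATEOAS idea concrete, here is a minimal Python sketch that is not taken from the episode. It assumes HAL-style `_links` objects and uses a hypothetical in-memory `RESOURCES` table in place of real HTTP calls; `fetch` and `follow` are illustrative names. The client knows only the entry point and navigates everything else by link relation name.

```python
import json

# Hypothetical resources keyed by URL. In a real system each would be the JSON body of
# an HTTP response; the "_links" shape loosely follows the HAL convention (an assumption).
RESOURCES = {
    "/": {"_links": {"self": {"href": "/"},
                     "orders": {"href": "/orders"}}},
    "/orders": {"count": 1,
                "_links": {"self": {"href": "/orders"},
                           "order-1": {"href": "/orders/1"}}},
    "/orders/1": {"id": 1, "status": "shipped",
                  "_links": {"self": {"href": "/orders/1"},
                             "customer": {"href": "/customers/7"}}},
    "/customers/7": {"id": 7, "name": "Ada",
                     "_links": {"self": {"href": "/customers/7"}}},
}


def fetch(url: str) -> dict:
    """Stand-in for an HTTP GET that returns a parsed JSON body."""
    return json.loads(json.dumps(RESOURCES[url]))


def follow(entry_point: str, *rels: str) -> dict:
    """Start at the entry point and follow link relations by name, so the client
    only hard-codes the entry point URL, never the URLs of other resources."""
    resource = fetch(entry_point)
    for rel in rels:
        resource = fetch(resource["_links"][rel]["href"])
    return resource


print(follow("/", "orders", "order-1", "customer")["name"])  # Ada
```

Because `/customers/7` is reached only through a link, the service that owns customers could move it to a different URL without breaking this client.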

Another common integration pattern is asynchronous communication using services such as SQS, Azure Service Bus, or RabbitMQ, which has the benefit of truly decoupling microservices from each other. Since the communication is carried out by a broker, individual services may not be aware of the location of the receiver of the request. This also gives individual services the ability to scale independently, and to recover and respond to messages in case of failure. However, this communication pattern lacks immediate feedback and is slower than synchronous communication.
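A minimal sketch of the asynchronous pattern, assuming Python's `asyncio.Queue` as an in-memory stand-in for a broker such as SQS, Azure Service Bus, or RabbitMQ. A real broker adds durability, delivery guarantees, and redelivery that this sketch lacks, and the service names and event shape are hypothetical. The publisher returns as soon as events are queued, which is where both the decoupling and the lack of immediate feedback come from.

```python
import asyncio


async def order_service(broker: asyncio.Queue, order_ids: list) -> None:
    """Publishes events and moves on; it neither knows nor waits for the consumer."""
    for order_id in order_ids:
        await broker.put({"type": "OrderPlaced", "order_id": order_id})


async def invoicing_service(broker: asyncio.Queue, invoices: list) -> None:
    """Consumes events at its own pace; the publisher gets no immediate reply."""
    while True:
        event = await broker.get()
        invoices.append(f"invoice-for-order-{event['order_id']}")
        broker.task_done()


async def main() -> list:
    broker: asyncio.Queue = asyncio.Queue()  # in-memory stand-in for a real broker
    invoices: list = []
    consumer = asyncio.create_task(invoicing_service(broker, invoices))
    await order_service(broker, [101, 102, 103])
    await broker.join()  # block until every published event has been processed
    consumer.cancel()
    try:
        await consumer
    except asyncio.CancelledError:
        pass
    return invoices


print(asyncio.run(main()))
```

The `broker.join()` call exists only so the demo can print a result; in production the publisher would not wait on its consumers at all.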

The last integration model that I would like to point out is data replication. In this model, you share data between microservices by copying and transforming the data that is available in one database onto another replica, which is used by another microservice. Such a replication process can be triggered in batches or on certain events in the system, and the same data can then be used by different services to carry out their own responsibilities.

David Brown

There are a lot of points there. I just want to ask a couple of questions. Just on that last point, you said that the last integration pattern is to replicate the database, transform it, and create a new database which has the content required by different microservices. Now, we know that in a microservices world, we should have a unique database for each microservice, right?

So you're saying that instead of making a call to a separate microservice for the data it doesn't have, you'd replicate the data from that other database and transform it to whatever format the other microservice needs. Is that right?

Rahul Rai

The moment you try to couple microservices at any particular layer, they don't stay microservices anymore, because then you can't safely manage the data that is stored, or persisted, by the microservice, which is typically called its “state.” And you can't expect the state to be consistent.

So, let's say two microservices are sharing the same state, or, to go even more granular, they're sharing the data that's written in a particular table. Now you can't, at any point in time, safely modify any of the data in that table or dictate the schema of that table from any particular service, because you've bound the services together. So, you can't call these services microservices anymore, because they break a basic tenet of microservices, which is that services can be independently deployed and versioned. You can't do that with these services.

So, in such cases, it's always a better choice to replicate the data. And you don't have to actually copy data from one database to another by running heavy batch operations, SSIS jobs, or any other transformation service. You can stream data to other services through events, and through that, another replica of the database can be rehydrated and used by other services.
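Here is a sketch of streaming events to rehydrate a replica, under simplifying assumptions: a Python list stands in for the event stream, and `CustomerService` and `ShippingReplica` are hypothetical. The owning service is the only writer. The replica transforms each event into the shape it needs and never writes back.

```python
from dataclasses import dataclass, field


@dataclass
class CustomerService:
    """Source of truth for the customer domain: the only place writes happen."""
    db: dict = field(default_factory=dict)
    event_log: list = field(default_factory=list)  # stand-in for an event stream

    def upsert(self, customer_id: int, name: str, email: str) -> None:
        self.db[customer_id] = {"name": name, "email": email}
        self.event_log.append({"type": "CustomerUpserted", "id": customer_id,
                               "name": name, "email": email})


@dataclass
class ShippingReplica:
    """Read model owned by a hypothetical shipping service; it never writes back."""
    labels: dict = field(default_factory=dict)
    position: int = 0  # how far into the stream this replica has read

    def catch_up(self, stream: list) -> None:
        for event in stream[self.position:]:
            if event["type"] == "CustomerUpserted":
                # Transform into the shape shipping needs (it has no use for email).
                self.labels[event["id"]] = event["name"].upper()
        self.position = len(stream)


customers = CustomerService()
customers.upsert(7, "Ada Lovelace", "ada@example.com")
customers.upsert(8, "Alan Turing", "alan@example.com")

replica = ShippingReplica()
replica.catch_up(customers.event_log)
customers.upsert(7, "Ada King", "ada@example.com")  # a later change at the source
replica.catch_up(customers.event_log)
print(replica.labels)  # {7: 'ADA KING', 8: 'ALAN TURING'}

# A brand-new replica can be rehydrated by replaying the whole stream.
fresh = ShippingReplica()
fresh.catch_up(customers.event_log)
print(fresh.labels == replica.labels)  # True
```

Tracking `position` lets a replica resume where it left off. Replaying from zero is what makes it possible to rebuild a replica from scratch.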

David Brown

So, I'm guessing that's only applicable in a read-only situation. Like you're saying, you'd run into conflicts if you have two microservices connected to the same database and updating the data, right? So in that particular use case, I'm guessing that the microservice which is getting that stream of data, or the replicated database, is only reading the data, right?

Rahul Rai

In a microservices world, a microservice is the source of truth for the domain that it is working on, which essentially means that any other service can only rely on this data for reading purposes. It can’t actually write data and become the source of truth itself. So yes, that is the case.

David Brown

So, there was one other point you made regarding, I think you called it, the composite frontend model for frontend application design. And you said it wasn't applicable to rich clients like mobile applications. Can you tell us a little bit more about how that's evolved? What have we evolved from to get to that point, and how does it differ?

Rahul Rai

So, from the composite user interface to the backend for frontend? Typically, and this is what I've seen happening most of the time in organisations, teams are structured so that they build capabilities across the organisation. For example, there will be a team that specializes in servers and databases, another team that specializes in user interfaces, and then a bunch of programmers that bridge the gap between the user interface and the databases. If you form guilds like these, then for any request that needs to be satisfied, for example, if you need to add a new frontend to your application that requires some middleware processing and then some data to be served from the database, you need to go through all three of these teams. And the communication is generally very slow.

And in organisations where teams are structured like this, it is very hard to realize the concept of microservices, because microservices and domain-driven design go hand in hand, and you need to structure your teams so that each one has all of the capabilities it requires.

So, you have some members who specialize in user interfaces, some who specialize in building the middleware, for example web APIs or any other processing system that you require in between, and some for the database, and you put them together so that whenever you have requirements, there is no communication gap between these team members. That team structure can work very efficiently when you also make the user interface part of the microservice. So your user interface is not a monolith in itself; rather, the team can work together and deliver the user interface along with the entire functionality that they're shipping.

This sounds all right, as it's very easy to realize with frameworks like Angular or libraries like React, which give you the ability to build user interfaces like this. But when it comes to mobile application development, it's very hard to build mobile apps that are segregated like this. Of course, you can use frameworks like Ionic or React Native to still somehow realize a composite user interface in mobile applications. But when we are talking about mobile applications that are built natively, say you're building your Android apps using Java or, I'm not very good with mobile app dev, using native language features, then obviously you can't build composite user interfaces there, because people need to have very niche skills to develop such apps.

So there, you can still build your mobile apps as a monolith, in that people who specialize in building such applications build the UI for you. But then for serving data, you rely on a BFF layer. It doesn't always need to be REST-enabled, as there are so many other technologies available now; GraphQL, for example, is one of them. So you build a very thin service layer that sits behind these mobile applications, and developers can serve their data requirements by talking to this thin layer behind the scenes. The rest of the services can then be microservices that sit behind this thin BFF service.
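A backend for frontend can be sketched as a thin endpoint that fans out to the services behind it and returns a single payload shaped for one screen of the app. This minimal Python sketch uses stubbed coroutines in place of real network calls. `profile_service`, `orders_service`, and `mobile_home_screen` are hypothetical names, and the choice between REST and GraphQL for the BFF's own interface is left out.

```python
import asyncio


# Hypothetical downstream microservices, stubbed as coroutines instead of HTTP calls.
async def profile_service(user_id: int) -> dict:
    await asyncio.sleep(0)
    return {"id": user_id, "first": "Ada", "last": "Lovelace", "email": "ada@example.com"}


async def orders_service(user_id: int) -> list:
    await asyncio.sleep(0)
    return [{"id": 1, "total_cents": 2599, "status": "shipped"},
            {"id": 2, "total_cents": 1000, "status": "pending"}]


async def mobile_home_screen(user_id: int) -> dict:
    """BFF endpoint: one round trip for the app, concurrent fan-out behind it."""
    profile, orders = await asyncio.gather(profile_service(user_id), orders_service(user_id))
    # Shape the response for this screen only; fields the app doesn't need are dropped.
    return {
        "greeting": f"Hi, {profile['first']}",
        "pending_orders": sum(1 for o in orders if o["status"] == "pending"),
        "last_order_total": f"${orders[-1]['total_cents'] / 100:.2f}",
    }


print(asyncio.run(mobile_home_screen(7)))
```

The mobile team consumes one screen-shaped contract, while the services behind the BFF stay free to evolve independently.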

David Brown

Thanks.

Kevin Montalbo

All right. I want to dig into this a little deeper. In our last podcast episode, we talked about taking a more modular approach rather than a monolithic one as the better way for organisations to embark on their digital transformation journeys. Do you think that this modular approach is the way forward as well?

Rahul Rai

So, before we dismiss monoliths, I would just like to say that microservices are not a cure-all. In fact, in most cases, monoliths are a better design choice, and some monoliths, such as single-process or modular monoliths, have a whole lot of advantages as well. It is much simpler to deploy a monolith, and it's much easier to avoid many of the pitfalls associated with distributed systems, such as transactions and latency. Monoliths can also result in much simpler developer workflows: monitoring, troubleshooting, and other activities like end-to-end testing. In fact, in many organisations, monoliths have a bad reputation only because the application concerns are poorly segregated, which makes it hard to decouple parts of the application and for developers to deploy them independently.

Now, that being said, as a monolith grows, it starts yielding diminishing returns, and one of the biggest advantages of microservices is isolation. Isolation between services makes the adoption of continuous delivery very simple. This allows you to safely deploy applications, roll out changes, and revert deployments in case of failures. Since services can be individually versioned and deployed, you can achieve significant savings in the deployment and testing of microservices, which is not the case with monoliths.

David Brown

You made an interesting point there. You said that microservices have their place, but the monolith is still a very valid design and still very much has its place. So where do you see the distinction? When should you choose a monolithic application design, and when should you choose a microservice model?

Rahul Rai


So, one of the things that I would generally like to call out is that if the teams are very small and the application concerns are not massive, for example, if you're building a simple web application, or even a small e-commerce store, you shouldn’t start by building services for each and every concern. And even more so if your team is very small, don't start with microservices, because developers would be better off focusing their time and attention on a single code base and deploying it together than on a ton of services and managing them. So start with a monolith, designed nicely of course so that things are nicely segregated, and when the need comes, as the monolith starts growing and you see that it has started addressing a host of concerns, then you start spreading out the modules that it is made up of.

So think of it like Lego blocks. You start by putting all of these blocks together to build a single structure. But when the time comes, you can take a set of blocks and deploy it independently from everything else. As the team grows and as the business concerns grow, start segregating them. But don't jump into this with the idea that you will start off with microservices even when the domain boundaries are not very clear and you don't know what you are going to do. Microservices give you the benefit of being able to experiment with something, and if it doesn't work out, you can throw it away. But so do monoliths, as long as they are small enough to experiment with and modify as time goes on.
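The Lego-block idea can be sketched as a module boundary inside a monolith. In this minimal Python example, which illustrates the idea rather than prescribing a design, `Checkout` depends only on a hypothetical `InventoryModule` protocol. The in-process implementation could later be replaced by a client for an independently deployed inventory service without changing the caller.

```python
from typing import Protocol


class InventoryModule(Protocol):
    """The boundary other modules depend on, rather than a concrete implementation."""
    def reserve(self, sku: str, qty: int) -> bool: ...


class InProcessInventory:
    """Today: a module inside the monolith, called in-process."""
    def __init__(self, stock: dict) -> None:
        self.stock = dict(stock)

    def reserve(self, sku: str, qty: int) -> bool:
        if self.stock.get(sku, 0) < qty:
            return False
        self.stock[sku] -= qty
        return True


class Checkout:
    """Depends only on the InventoryModule boundary, so the implementation can be
    swapped for a remote client once inventory is split into its own service."""
    def __init__(self, inventory: InventoryModule) -> None:
        self.inventory = inventory

    def place_order(self, sku: str, qty: int) -> str:
        return "confirmed" if self.inventory.reserve(sku, qty) else "out of stock"


checkout = Checkout(InProcessInventory({"lego-brick": 3}))
print(checkout.place_order("lego-brick", 2))  # confirmed
print(checkout.place_order("lego-brick", 2))  # out of stock
```

Everything still ships as one deployable here. The boundary is what makes extracting a block later a contained change rather than a rewrite.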

David Brown

I think there's some good advice there. If the application design is done well from the outset, so that the monolithic design is modular and can be broken down into independent services in the future, that's going to make your life a lot easier if you ever want to evolve that code base into microservices.

We're seeing a lot of projects taking that approach, where the monolith has become too unwieldy and too unmanageable, and they want to break it down into independent services. We can provide tool sets to facilitate that. But you also make a valid point: if you don't have the capabilities, if you're working with a small team, and it's not just the development team but the DevOps team as well, maybe you're not ready for microservices yet. Building a monolithic application is going to make the code base easier to maintain and easier to deploy; just give it some architectural consideration so that it can be broken down into independent services in the future.

Kevin Montalbo

Yeah. Since we're on the topic of microservices and their complexities: microservices are deployed in containers, right? So with a microservices-based application, you can end up managing a lot of containers. How do container orchestration engines such as Kubernetes, AWS ECS, and Docker Swarm help with managing these container-based microservices?

Rahul Rai

So, in the last answer that I presented, I spoke about isolation, and I just want to build on that idea. Ideally, you want to run each microservice instance in isolation. Isolation ensures that one microservice doesn't affect another; for example, if one microservice gobbles up all the CPU, the rest of the services on the host starve. Virtualization is one of the ways to create isolated execution environments on existing hardware, but typical virtualization techniques like Hyper-V can be quite heavy when you consider the size of the microservices that they host. Containers, on the other hand, provide a much more lightweight way to provision isolated execution environments for services. They're also much faster to spin up, and they are much more cost-effective for many architectures.

On a single VM, there's only a handful of Hyper-V instances that you can spin up, because they want each of them [inaudible] a copy of the operating system and the rest of the networking capability. But with containers, you can spin up hundreds or thousands of containers if the VM is big enough. After you begin playing around with containers, you will also realize that you need something to manage these containers across a lot of underlying machines, and container orchestration platforms like Kubernetes do exactly that. They allow you to distribute container instances in such a way that they provide the robustness and throughput the service demands, and they ensure that the underlying machines are used to their fullest capacity.

So there isn't much wastage with respect to the CPU or the memory. But even with Kubernetes, and containers for that matter, my advice remains the same: don't feel the need to rush to adopt Kubernetes, or even containers. Even though they offer a significant advantage over more traditional deployment techniques, it is very difficult to justify them if you have only a few services to host. Once the overhead of managing deployments begins to become a significant headache, start considering containerization of your services and the use of Kubernetes.

But even if you end up using Kubernetes, just make sure that someone else is running the Kubernetes cluster for you, so use a managed service on a public cloud provider, because running your own Kubernetes cluster can be a significant amount of work.

Kevin Montalbo

Well, what is a service mesh, and how does it facilitate microservices?

Rahul Rai

A service mesh provides a policy-based services network that addresses east-west, that is, service-to-service, networking concerns. It takes care of concerns like security, resiliency, and visibility, so that services can communicate with each other while offloading all of these network-related concerns to the platform, which is the service mesh.
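To illustrate what offloading resiliency to the platform means, this Python sketch shows retry-with-backoff logic of the kind a mesh applies on a service's behalf. In a real mesh, this runs in the sidecar proxy and is configured declaratively rather than written in application code; Istio, for example, lets you set retries on a `VirtualService`. `RetryPolicy`, `sidecar_call`, and the flaky upstream are hypothetical stand-ins.

```python
import time
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class RetryPolicy:
    """The kind of knobs a mesh exposes declaratively (these names are hypothetical)."""
    attempts: int = 3
    backoff_seconds: float = 0.01


def sidecar_call(policy: RetryPolicy, upstream: Callable[[], str]) -> str:
    """Stand-in for a sidecar proxy: retries failed calls so service code doesn't have to."""
    for attempt in range(policy.attempts):
        try:
            return upstream()
        except ConnectionError:
            if attempt < policy.attempts - 1:
                time.sleep(policy.backoff_seconds * 2 ** attempt)  # exponential backoff
    raise ConnectionError(f"gave up after {policy.attempts} attempts")


calls = {"n": 0}


def flaky_order_service() -> str:
    """Hypothetical upstream that fails twice, then recovers."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise ConnectionError("connection reset")
    return "order 42 accepted"


print(sidecar_call(RetryPolicy(), flaky_order_service), "after", calls["n"], "calls")
```

The point of the mesh is that every service gets the same policy without each team reimplementing a wrapper like this in its own language.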

David Brown

I think at this point in time, Rahul, some people might be thinking that sounds an awful lot like an API gateway: the concept of service discovery and routing requests to the correct microservice. Whereas Kubernetes is saying, “Okay, you have an order service, and we need to spin up more instances of that order service, and I know where we can deploy those. And if a container goes down, I can spin up another one.”

Now we understand that the service mesh is more about that east-west communication of service discovery, so that the shopping cart service knows where the order service exists and how to communicate with it. But how does that differ from the functionality of an API gateway?

Rahul Rai

That's a very good point, actually. I've heard this question from quite a few people, and how I go about explaining it is that application gateways generally sit in front of your application. An application can be made up of multiple services. Take the Amazon website, for example: I don't know how many, but I'm sure there are to the tune of hundreds of services sitting behind it, powering that experience. When we are talking about a service mesh, as I said, it is there to mostly address east-west networking concerns: how each of these individual services sitting behind the UI communicate with each other, and how they can handle common concerns, for example, security, resiliency, and all those good things, in a very consistent manner.

The API gateway actually sits in front of services, so it handles [inaudible] traffic, which is between one application and another. So, you have these common gateways that simply forward requests, after some transformation, to an application, and the application then uses its services to return a response via the gateway.

So, think of a gateway as something sitting at your domain boundary, but within your domain, you have a bunch of services that are talking to each other. So, both of these are complementary to each other because the application gateway doesn't need to sit in front of each and every service of your application stack. It just needs to manage the boundary of your domain.

I've seen implementations where the application gateway is also installed inside Kubernetes but only used for establishing the domain boundary. So your domain has a bunch of services which use the service mesh to talk to each other, but any calls that go out of the domain, or that come in to the services inside the domain, go via the API gateway. Yeah. So that's the segregation between the two.
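Here is a rough sketch of that boundary role, in which the gateway authenticates and routes only the paths a domain chooses to expose, while service-to-service calls inside the domain bypass it. The routing table, header check, and service names are hypothetical. A production gateway would proxy the request rather than return the target's name.

```python
# Hypothetical routing table: only these path prefixes are exposed at the domain boundary.
PUBLIC_ROUTES = {
    "/api/orders": "order-service",
    "/api/cart": "cart-service",
}


def gateway_route(path: str, headers: dict) -> tuple:
    """Boundary entry point: check credentials once, then pick a target by path prefix.
    Calls between services inside the domain never pass through here; in the setup
    Rahul describes, they travel over the service mesh instead."""
    if "authorization" not in {name.lower() for name in headers}:
        return 401, "missing credentials"
    for prefix, service in PUBLIC_ROUTES.items():
        if path == prefix or path.startswith(prefix + "/"):
            return 200, service
    return 404, "not exposed outside the domain"


print(gateway_route("/api/orders/17", {"Authorization": "Bearer token"}))  # (200, 'order-service')
print(gateway_route("/internal/pricing", {"Authorization": "Bearer token"}))  # (404, 'not exposed outside the domain')
print(gateway_route("/api/cart", {}))  # (401, 'missing credentials')
```

Matching on `prefix + "/"` keeps a path like `/api/ordersX` from being routed to the order service by accident.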

Kevin Montalbo

So, you're an experienced cloud architect. What problems do you often encounter when an enterprise tries to implement microservices in their organisation?

Rahul Rai


As for the most common challenge that I see, I would like to go back to a point I made earlier, which is that many organisations are not organised for building microservices. To put some facts around it, I would like to talk about Conway's Law. Conway's Law states that any organisation that designs a system will produce a design whose structure is a copy of the organisation’s communication structure. Many people think that the organisation’s communication structure in Conway's Law represents the organisation's hierarchy. However, it rather represents how the various teams in an organisation communicate with each other.

For example, an e-commerce company might have a product team and an invoicing team. Any application designed by this organisation will have a product module and an invoicing module that communicate with each other through a common interface.

Now, I have seen massive architectural representations of such organisations, which are very complex and impossible to maintain. Using Conway’s Law in conjunction with the principles of domain-driven design can help an organisation enhance its agility and design scalable and maintainable solutions. For example, in an e-commerce company, teams may be structured around domain components rather than the application layers that they specialise in; user interface, business logic, and database is not how teams should be structured. You can have clearly defined domains and set up teams around them, and then the teams won't need to interact with each other too frequently.

Also, the interface between teams will not be too complex and rigid. Such domain-segregated team layouts are very commonly employed by the successful organisations that have implemented microservices and that all of us emulate.

Take Amazon and Netflix, for instance: each team within such organisations is responsible for creating and maintaining part of the domain. They are not structured around a specialization as such. The concept of domain-driven design brings me to another point that I would like to make here. Most young organisations, or organisations that are trying to tackle a new problem, find that no one fully understands the domains of the microservices that they want to build. Without such understanding, making the wrong choice is a very costly mistake: segregating the domains incorrectly might lead to having to solve some of the most complex problems of distributed computing in the future, for example, distributed transactions, data consistency, and latency.

So I recommend that organisations take some time to discover the domain using tools such as brainstorming workshops or domain discovery workshops before embarking on the journey to build microservices.

David Brown

You talked about the domain driven design and having teams working on the microservice, which are responsible for that domain. And that makes a whole lot of sense, but there are going to be other teams from other domains which are going to be relying on the services of someone else's team. So what do you recommend in terms of a team responsible for a domain liaising with the stakeholders that are going to be consuming my service?

Rahul Rai


So, that's actually a valid point, and it's something I spoke about: when you segregate teams by domain, you reduce the communication that needs to happen between these teams. For example, let's say I'm working on the user interface and you're working on the backend. If we are teams of our own, then in order to get a single feature across, a lot more communication would need to happen between you and me than if we were part of the same team.

So, you reduce the organisational chitchat that goes on by a significant margin if you structure teams so that they don't need to talk to each other that often. Now, of course, when teams rely on each other for certain data, they can establish contracts and satisfy those contracts, rather than always having to go back and forth with very fluid contracts between them.

David Brown

By contracts, you mean API contracts?

Rahul Rai

The contracts can be for a lot of things, not just an API contract. They can also include things like the mode of communication, the escalation hierarchy, and the organisation structure as well. API contracts only cover serving data to and from a microservice, but then how would you go about monitoring, and how would you go about escalating problems to the right set of people? So those are also contracts that need to be established.

Kevin Montalbo

All right, thank you very much for being with us. Please feel free to invite our listeners to view or read your work. Where can they find your work?

Rahul Rai

So, I blog very frequently at my website. The URL is TheCloudBlog.net. You can find me there, and all the links to my socials and my email are available over there, so people can use those links.

Kevin Montalbo

All right! Thanks, Rahul. David, thanks for joining us as well. You can listen to our previous episodes, which are available for streaming or download on your favorite podcast platforms. Also, visit our website at www.torocloud.com for our blogs and our products.

We're also on social media - Facebook, LinkedIn, YouTube, Twitter, and Instagram. Just look for TORO Cloud. Thank you very much for listening to us today. This has been Kevin Montalbo, David Brown, and Rahul Rai for Coding Over Cocktails.

Show notes:

- The Cloud Blog

- Rahul Rai on LinkedIn

- Rahul Rai on GitHub

- Rahul Rai on Twitter